Individual Research Projects

There were 15 Early Stage Researchers in the CLEOPATRA programme. Details of their projects are given below:

1. Fact extraction and cross-lingual alignment (Gottfried Wilhelm Leibniz University Hannover, LUH)

2. Interactive user access models to cross-lingual information (Gottfried Wilhelm Leibniz University Hannover, LUH)

3. Crowd quality and training in hybrid multilingual information processing and analytics (King’s College London, KCL)

4. Incentives design for hybrid multilingual information processing and analytics (King’s College London, KCL)

5. Fact validation across multilingual text corpora (Rheinische Friedrich-Wilhelms-Universität Bonn, UBO)

6. Interactive multilingual question answering (Rheinische Friedrich-Wilhelms-Universität Bonn, UBO)

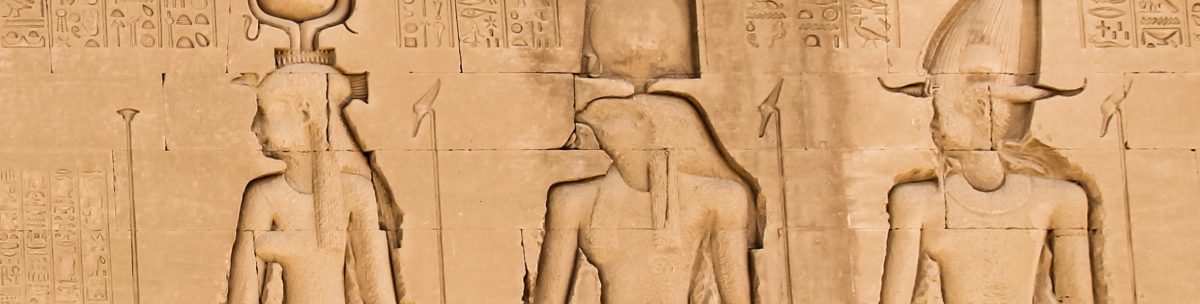

7. Relations of textual and visual information (TIB Hannover – German National Library of Science and Technology, TIB)

8. Contextualisation of images in multilingual sources (TIB Hannover – German National Library of Science and Technology, TIB)

9. National and transnational media coverage of European parliamentary elections, 2004-2014 (University of London, UoL)

10. Nationalism, internationalism and sporting identity: the London and Rio Olympics/Paralympics (University of London, UoL)

11. Information propagation with barriers (Institut Jožef Stefan, JSI)

12. Cross-lingual news reporting bias (Institut Jožef Stefan, JSI)

13. Multilingual Wikipedia as ‘first draft of history’ (University of Amsterdam, UvA)

14. NLP for under-resourced languages (Sveuciliste u Zagrebu Filozofski Fakultet – University of Zagreb, FFZG)

15. Cross-lingual sentiment detection (Sveuciliste u Zagrebu Filozofski Fakultet – University of Zagreb, FFZG)

18. Multi Modal Fact Validation (TIB Hannover – German National Library of Science and Technology, TIB)

19. Cross Cultural Comparison of Russian State Controlled and Independent Media (University of Amsterdam, UvA)

| ESR1: Tin Kuculo |  |

PhD enrolment: LUH | |||

| Project Title: Fact extraction and cross-lingual alignment (WP4 – Event-centric cross-lingual Information processing) | |||||

| Objectives: Extract and interlink mentions of related facts and their multilingual context and establish their semantic and temporal relations in comparable corpora by leveraging hybrid computational methods while utilizing NLP and ML-based technologies. | |||||

| Impact: QuoteKG, a multilingual knowledge graph of quotes, was developed to aid in understanding world history and influencing societal discourse. It contains nearly a million quotes across 55 languages from over 69,000 public figures. Wikiquote was used as a primary source to address the challenges of context scarcity in quote collections, language variation, and quote alignment, and a language-agnostic transformer model was employed for cross-lingual alignment. With its rich metadata and context for each quote, QuoteKG facilitates cultural and historical research, as demonstrated by its application in curating event quote collections and conducting event-centric analyses. | |||||

| Future research: The Semantic Web and NLP communities have developed different methods for event-centric information extraction and representation. Knowledge graphs focus on named events, while NLP-based approaches focus on finer-grained, self-contained events. Follow-up work aims to incorporate the strengths of both Semantic Web and NLP perspectives and presents a new approach, Ontology-Guided Event Extraction (O-GEE), to address challenges associated with event ontologies, the extraction of relevant event relations, and the alignment of event ontologies in NLP and the Semantic Web.

A two-step approach is planned to extract fine-grained event locations from large-scale knowledge graphs, such as Wikidata, DBpedia, and YAGO. The method first integrates a geographic knowledge graph into an event knowledge graph to counteract the lack of detailed location information, and then employs a graph neural network on the combined event knowledge graph to extract precise event locations. |

|||||

| ESR2: Sara Abdollahi |  |

PhD enrolment: LUH | |||

| Project Title: Interactive user access models to cross-lingual information (WP5 – Hybrid computation, user interaction and question answering) | |||||

| Objectives: Develop user interaction models that enable users to efficiently and effectively access extracted event-centric multilingual information and its context and analyse language-specific differences. | |||||

| Impact: The LaSER language-specific event recommendation algorithm was developed to help users (mostly researchers and journalists) by supporting cross-language exploration, Web navigation and exploratory search. | |||||

| Future research: Document retrieval for events using query expansion and knowledge graphs; and building event-centric collections from web archives, the latter as a result of my research during my secondment at the British Library. | |||||

| ESR3: Gabriel Amaral |  |

PhD enrolment: SOTON / KCL | |||

| Project Title: Crowd quality and training in hybrid multilingual information processing and analytics (WP5 – Hybrid computation, user interaction and question answering) | |||||

| Objectives: Design a mixed-crowdsourcing workflow to produce high-quality multilingual data for knowledge graphs. | |||||

| Impact: The research has made great strides in the Wikidata research community in uncovering the impact that the quality of references (for Wikidata claims) has on the perceived quality of the information itself. We explored how humans perceive the quality of such references, how best to measure it, the current state of such quality metrics in Wikidata, and direct steps that could be taken to improve it. This part of our research was extremely well received by the community and was granted a Wikimedia Foundation Research Award of the Year. We followed this up by constructing an automated pipeline to assist in the maintenance of Wikidata (or any KG) references. We also explored how such a model can be explained in order to gain the trust and understanding of its users. | |||||

| Future research: Follow-ups for this research would look into other ways to automate fully or partially the measurement of relevant metrics for reference quality. This could focus on the main metrics we have established (relevance, authoritativeness, and ease of use), or others. NLP models are making huge advancements (e.g. ChatGPT), so applying these techniques to this task seems like a natural next step. | |||||

| ESR4: Elisavet Koutsiana |  |

PhD enrolment: KCL | |||

| Project Title: Incentives design for hybrid multilingual information processing and analytics (WP5 – Hybrid computation, user interaction and question answering) | |||||

| Objectives: Understand what motivates people to engage in knowledge graph creation and curation activities, across language contexts, and devise incentive mechanisms to foster useful knowledge graph contributions. | |||||

| Impact: The contribution investigated how members of the community work and interact through their discussions, providing valuable input to peer production communities, particularly the collaborative knowledge graph communities. The research study confirmed how important discussions are as a source of insights regarding members’ collaboration and provided frameworks for studying online discussions in collaborative knowledge graph projects, such as qualitative analysis coding schemes for investigating the main topics discussed, as well as for identifying argumentation patterns and the role of discussion participants in controversial discussions. An overview of the complete corpus of Wikidata discussions was provided, as well as publicly available code for descriptive statistical analysis of the corpus. The research suggests design improvements and topics for follow-up studies in knowledge graph quality and editor engagement. | |||||

| Future research: Future directions of this research could investigate how decisions are made and how they impact knowledge graph construction, and how discussions and members’ interaction impact the quality of the knowledge graph. | |||||

| ESR5: Jason Armitage |  |

PhD enrolment (2019-20): UBO | |||

| Project Title: Fact validation across multilingual text corpora (WP4 – Event-centric cross-lingual Information processing) | |||||

| Objectives: Develop hybrid methods for cross-lingual fact validation and leverage multilingual distributed sources to provide a more complete set of source candidates in order to validate the facts. | |||||

| Expected Results: Methods for hybrid cross-lingual fact validation using heterogeneous information sources. | |||||

| Unimodal systems that process natural language predominate in research on fact validation, but reporting on events is rarely composed of text alone. The co-existence of multiple modalities creates an opportunity to implement machine learning systems that retrieve, combine, and model diverse inputs. Fact validation systems that process multiple modalities are able to assess additional evidence, learn rich representations, and conduct supplementary analyses on source data.

Fact validation is composed of the sub-tasks of parsing inputs, retrieving evidence, and inferring relations between the two. In related applications, approaches that compose a design – after breaking problems down into steps – are better suited to tasks with composite structure. Learning on multiple modalities introduces an additional level of complexity for monolithic architectures. This research aims to implement and evaluate systems optimised to process diverse data and adapt to the multiple sub-tasks comprising the fact validation pipeline. |

|||||

| ESR6: Endri Kacupaj |  |

PhD enrolment: UBO | |||

| Project Title: Interactive multilingual question answering (WP5 – Hybrid computation, user interaction and question answering) | |||||

| Objectives: Train neural networks to convert natural language queries to a formal query language, which will then be answered using existing knowledge bases. Enable efficient user interaction and feedback to enhance results. | |||||

Impact: Conversational Question Answering over Knowledge Graphs with Answer Verbalization. The impact of the research can be summarised as follows:

For Conversational QA, we studied whether the availability of entire dialog history, domain information, and verbalized answers can act as context sources in determining the ranking of KG paths while retrieving correct answers. We proposed an approach that models conversational context and KG paths in a shared space by jointly learning the embeddings for homogeneous representation. |

|||||

Further research: The contributions of this research pave the way for a more extensive research agenda. A few such directions are enumerated below:

|

|||||

| ESR7: Gullal Singh Cheema |  |

PhD enrolment: TIB | |||

| Project Title: Relations of textual and visual information (WP4 – Event-centric cross-lingual Information processing) | |||||

| Objectives: Develop and research (deep) learning systems that are able to (1) find the paragraphs and sentences in a text which are relevant to image content, and (2) predict the granularity and semantic level of text-image relations. | |||||

Impact:

|

|||||

| Further research: A follow-up on multimodal claims is already part of the CheckThat! 2023 challenge on Check Worthiness, Subjectivity, Political Bias, Factuality, and Authority of News Articles and Their Sources. The new test dataset, part of the challenge, contains tweets from 2021 and 2022. A few papers are planned on computational research into image-text relations, multimodal hate speech and the analysis of large multimodal language models. | |||||

| ESR8: Golsa Tahmasebzadeh |  |

PhD enrolment: TIB | |||

| Project Title: Contextualisation of images in multilingual sources (WP4 – Event-centric cross-lingual Information processing) | |||||

| Objectives: Research how surrounding text information can be utilized to infer and refine spatial and temporal information about an image in multilingual Web sources to support cross-lingual alignment. | |||||

Impact: Contextualization of news photos based on geolocation estimation and event type classification can impact the research field in various aspects such as:

|

|||||

Further research: Possible follow-up research directions are:

|

|||||

| ESR9: Daniela Major |  |

PhD enrolment: UoL | |||

| Project Title: National and transnational media coverage of European parliamentary elections, 2004-2014 (WP6 – Event-centric cross-lingual analytics and cross-cultural studies) | |||||

| Objectives: Explore information flows between national media, identify translingual concepts and topics emerging during the elections. | |||||

Impact: Research in CLEOPATRA has contributed to the following:

|

|||||

Further research: Possible future developments of the research include:

|

|||||

| ESR10: Caio Mello |  |

PhD enrolment: UoL | |||

| Project Title: Nationalism, internationalism and sporting identity: the London and Rio Olympics (WP6 – Event-centric cross-lingual analytics and cross-cultural studies) | |||||

| Objectives: Explore online discussion of the two recent Olympics, which took place on different continents, in different time zones, and in different linguistic contexts. | |||||

Impact: Research in CLEOPATRA has contributed to the following:

|

|||||

Further research: Possible future developments of this research include:

|

|||||

| ESR11: Abdul Sittar |  |

PhD enrolment: JSI | |||

| Project Title: Information propagation with barriers (WP6 – Event-centric cross-lingual analytics and cross-cultural studies) | |||||

| Objectives: Model the phenomenon of information propagation within the dynamic network of interconnected events. In other words, the objective is to model the characteristics of information spreading once a physical event happens somewhere in the world. | |||||

Impact: Many factors influence news selection, reporting, and spreading, including cultural, political, economic, geographic, and linguistic ones. Analysing these factors in news spreading related to different international events is an open research area. The impact of the research project can be summarised as follows:

|

|||||

| Further research: The developed methodologies will be used for social media analysis within the new TWON project (Twin of online social networks). | |||||

| ESR12: Swati |  |

PhD enrolment: JSI | |||

| Project Title: Cross-lingual news reporting bias (WP6 – Event-centric cross-lingual analytics and cross-cultural studies) | |||||

| Objectives: Analyse cross-lingual news reporting bias along several dimensions: topic, language, geography, political orientation, source, sentiment, time, attention and other contextual features. | |||||

| Impact: Introduction of a novel knowledge-infused learning framework for enhancing the prediction of political bias in news headlines and its extensive evaluation. We believe that our proposed framework would be a valuable tool for copy editors responsible for rewriting headlines. It also has the potential to be useful in practical applications such as e-journalism and manual news-bias prediction portals, where it could be used to automatically classify headlines into different bias types. In addition, it could help to reduce the number of articles that require manual examination, which is a time-consuming process prone to annotator bias.

|

|||||

Further research:

|

|||||

| ESR13: Anna Katrine Jørgensen |  |

PhD enrolment (2019-20): UvA | |||

| Project Title: Multilingual Wikipedia as ‘first draft of history’ (WP6 – Event-centric cross-lingual analytics and cross-cultural studies) | |||||

| Objectives: Perform cross-cultural comparison of Wikipedia language versions of articles on emerging news events and their temporal evolution. | |||||

| Expected Results: Identification of language-specific and community-specific differences across Wikipedia language editions with respect to coverage of emerging news events over time. | |||||

| Current events are popular and frequent pages on Wikipedia, with each language version offering a different representation of the events. These representations are sequences of temporally and culturally situated drafts of history that are continuously revised and repositioned by the Wikipedians. Despite their popularity, there is still a lack of research on how current event representations are created, how they develop over time, and how they differ across language versions.

In my PhD, I research the creation and development of current event pages on Wikipedia and perform large-scale temporal analysis of events across different European Wikipedia language versions. I specifically research events that have a social or cultural significance for the European Union or European cultures such as the European Refugee Crisis, the Arab Spring, and Brexit. I also work on cultural recommendation of page creations based on cultural and social relevance, preferences and importance, as well as the relationship between events, location and related entities. |

|||||

| ESR14: Diego Alves |  |

PhD enrolment: FFZG | |||

| Project Title: NLP for under-resourced languages (WP4 – Event-centric cross-lingual Information processing) | |||||

| Objectives: Extend Language Processing Pipelines (LPPs) for the well-resourced EU languages and gradually add new languages. | |||||

| Impact: The new typological methods that were proposed made it possible to compare and classify languages in an innovative way, providing valuable information for improving dependency parsing. The optimized methods were applied to low-resourced European languages (i.e. Lithuanian, Hungarian, Irish, and Maltese), thus improving their scores for automatic syntactic annotation. A Speech-To-Text translation method from Latvian to English was proposed to improve results by synthesising errors in the training phase; this solution allowed overall scores to be improved. As part of CLEOPATRA activities (RD Week and Hackathon), a new hierarchy for named-entity recognition (UNER) and classification was developed, with a specific pipeline for extracting and annotating data from Wikipedia which can be applied to any language available in it. Constructive discussions while using the data available in one of the collections of Arquivo.pt allowed the researchers from that institution to come up with new services to assist users of their resources. | |||||

| Further research: The typological methods which have been identified for a better understanding of linguistic phenomena when languages are combined to train dependency parsing models will be further improved, expanding the research to other linguistic families. Regarding the named-entity project, the idea is to continue working in this domain, first to improve the automatic extraction and annotation, and then to create UNER datasets for all EU languages. | |||||

| ESR15: Gaurish Thakkar |  |

PhD enrolment: FFZG | |||

| Project Title: Cross-lingual sentiment detection (WP4 – Event-centric cross-lingual Information processing) | |||||

| Objectives: Produce and test a cross-lingual sentiment detection module with support for under-resourced EU languages. | |||||

Impact: Sentiment analysis for low-resourced languages is an under-researched area. The impact of the research carried out within CLEOPATRA in the field can be summarised as follows:

|

|||||

Further research: Ideas for follow-up are:

|

|||||

| ESR17: Sahar Tahmasebi |  |

PhD enrolment: TIB | |||

| Project Title: Multi Modal Fact Validation (WP4 – Event-centric cross-lingual Information processing) | |||||

| Objectives: Develop methods for multimodal fact validation by exploiting hybrid inputs, provide evidence for them and learn rich representations. | |||||

| Impact: Research in this field provided a new approach for multimodal fake news detection with better generalisation capability on realistic use cases. | |||||

Further research: Possible follow-up research directions are:

|

|||||

| ESR18: Alberto Olivieri |  |

PhD enrolment (2022-3): UvA | |||

| Project Title: Cross Cultural Comparison of Russian State Controlled and Independent Media (WP6 – Event-centric cross-lingual analytics and cross-cultural studies) | |||||

| Objectives: Compare the images of the war/special military operation in Ukraine, and thereby the underlying semantic message that is implicitly conveyed by the actors involved. | |||||

| Expected Results: Insights on how state and independent actors develop, build, and spread narratives and on the visual semantics involved. | |||||

| The project focuses on the clash of narratives in contemporary society, the influence of these narratives on society at large, and the semantics they use. Today we witness ferocious debates over what is considered truth, waged from opposite sides of a narrative battle that can no longer be ignored. These narratives are powerful tools that can fundamentally shape an individual's belief system and influence how that individual perceives reality. As such, we should try to understand better how they work in the digital age, with its new and evolving challenges. | |||||